"Hey, our workflow is stuck again. Can you check?" It's 3 a.m. and your phone buzzes seven times. You don't even want to open the chat.

If you've ever worked in technical support, this scene will feel all too familiar.

I'm Landy, founder of Zadig. We serve enterprises, with more than 5,000 open-source installations and hundreds of production customers.

Anyone who's done technical support knows: it's not humanly sustainable.

# 01. The Pain of Technical Support—Only Those Who've Done It Truly Know

Our customers span finance, automotive, and the internet. Every day, all kinds of questions pour into our Feishu groups:

- "How do I configure the workflow?"

- "The Pod crashed again, please help!"

- "GitLab integration not working, SOS"

- "What should I watch out for when upgrading to v4.2?"

These questions are scattered across dozens of groups, and each requires an engineer to:

- Understand the situation

- Check the documentation

- Read the source code

- Propose a solution

There's an upper limit to how many high-quality responses a senior engineer can give per day, and the volume of questions grows far faster than headcount.

Even worse—knowledge loss.

A question may be answered today, but another customer will ask it again next week, and you'll have to start from scratch. Helpful chat messages are fragmented and can't be reused.

Our small team gets buried every day in messages like "Could you take a look?" and "How should I configure this?"

We wondered: could we 'clone' the capability of a senior engineer?

# 02. Why Previous AI Customer Support Solutions Didn’t Work

Nearly every AI customer support product we looked at is built on RAG (Retrieval-Augmented Generation):

Throw in the docs → RAG retrieves → AI generates a response

For simple FAQ questions like "How do I reset my password?" this works.

But for a complex system like Zadig, RAG alone falls far short.
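To make the docs-only pipeline concrete, here is a minimal sketch of the retrieval step. Everything in it is an assumption for illustration: the snippets in `DOCS` are made up, and plain word overlap stands in for the embedding search a real RAG product would use. The point is only that the answer can never contain anything the docs don't already say.

```python
import re

# Hypothetical documentation snippets; a real system would chunk and embed the actual docs.
DOCS = {
    "reset-password": "To reset your password, open Settings and click Reset Password.",
    "network": "If a workflow is stuck, check whether your network configuration is normal.",
    "workflow-config": "Configure a workflow by adding build and deploy stages.",
}


def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, docs=DOCS):
    """Return the (doc_id, text) pair whose words overlap the question the most."""
    q = tokenize(question)
    return max(docs.items(), key=lambda item: len(q & tokenize(item[1])))


def answer(question):
    """Stand-in for the generation step: a real system would pass the snippet to an LLM."""
    doc_id, text = retrieve(question)
    return f"[{doc_id}] {text}"
```

With these made-up snippets, `answer("How do I reset my password?")` finds the right page, while `answer("Workflow execution is stuck")` can only surface the generic network advice, because nothing in the docs mentions resource quotas.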

# Scenario 1: Workflow Gets Stuck

User says, "Workflow execution is stuck"—

❌ RAG AI:

"Please check if your network configuration is normal."

✅ Actual Problem:

The build Pod can't be scheduled due to insufficient resource quota

# Scenario 2: Error Logs

A user pastes a line from an error log:

❌ RAG AI:

Searches for the most relevant document snippet...

✅ Actual Problem:

You need to trace this error to the right function and triggering conditions in the source code
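As a rough sketch of what "tracing an error to the source" means mechanically, the snippet below searches a Go source tree for an error string and reports the file, line, and enclosing function. It is a toy under stated assumptions: `find_error_origin` is a hypothetical helper, not part of Zadig or OpenClaw, and the regex only recognizes top-level `func` declarations. An agent that actually reads code goes further and follows the call chain to the triggering conditions.

```python
import re
from pathlib import Path

# Matches "func Name(" and "func (recv *Type) Name(" at the start of a line.
FUNC_RE = re.compile(r"^func\s+(?:\([^)]*\)\s*)?(\w+)")


def find_error_origin(root, message):
    """Return (relative_path, line_number, enclosing_func) for each Go line containing `message`."""
    hits = []
    for path in sorted(Path(root).rglob("*.go")):
        current_func = None
        text = path.read_text(encoding="utf-8", errors="replace")
        for lineno, line in enumerate(text.splitlines(), start=1):
            match = FUNC_RE.match(line)
            if match:
                # Remember the most recent function declaration seen in this file.
                current_func = match.group(1)
            if message in line:
                hits.append((path.relative_to(root).as_posix(), lineno, current_func))
    return hits
```

Calling `find_error_origin("path/to/checkout", "some error text")` returns an empty list when the string never appears, which is itself useful: it suggests the error comes from a dependency rather than from the project's own code.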

The fundamental issue: Zadig is a complex system with dozens of microservices, hundreds of thousands of lines of Go code, and integrations with K8s/Helm/Docker.

- Documentation only covers "normal usage"

- But failures tend to happen in the edge cases the docs don't cover

What we needed was a 'real AI engineer who can read code.'

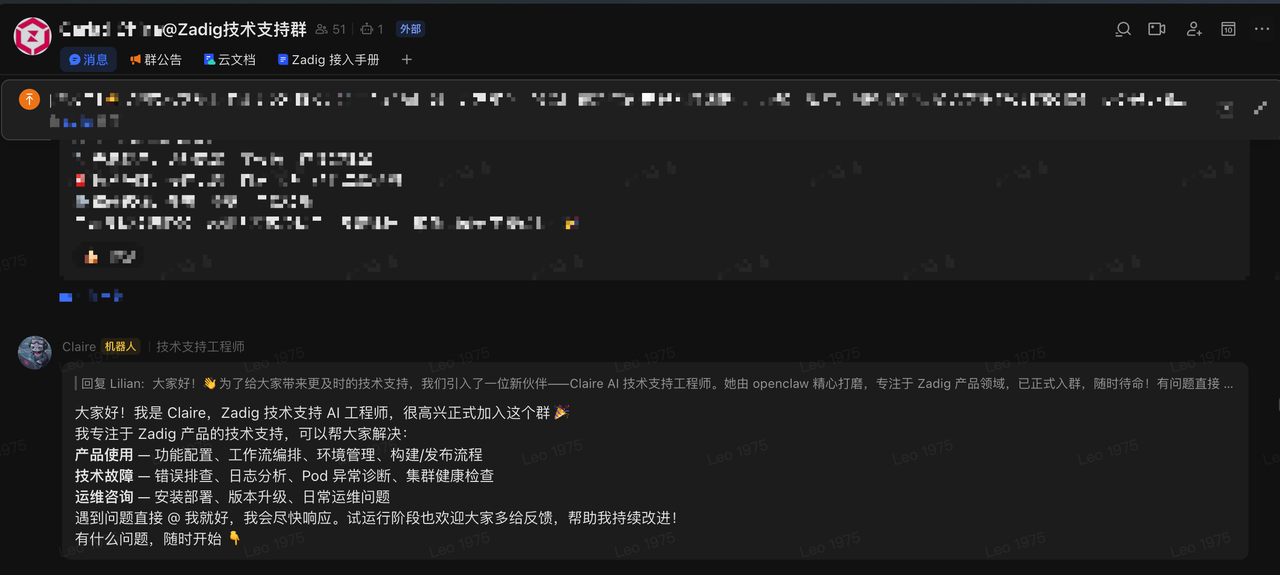

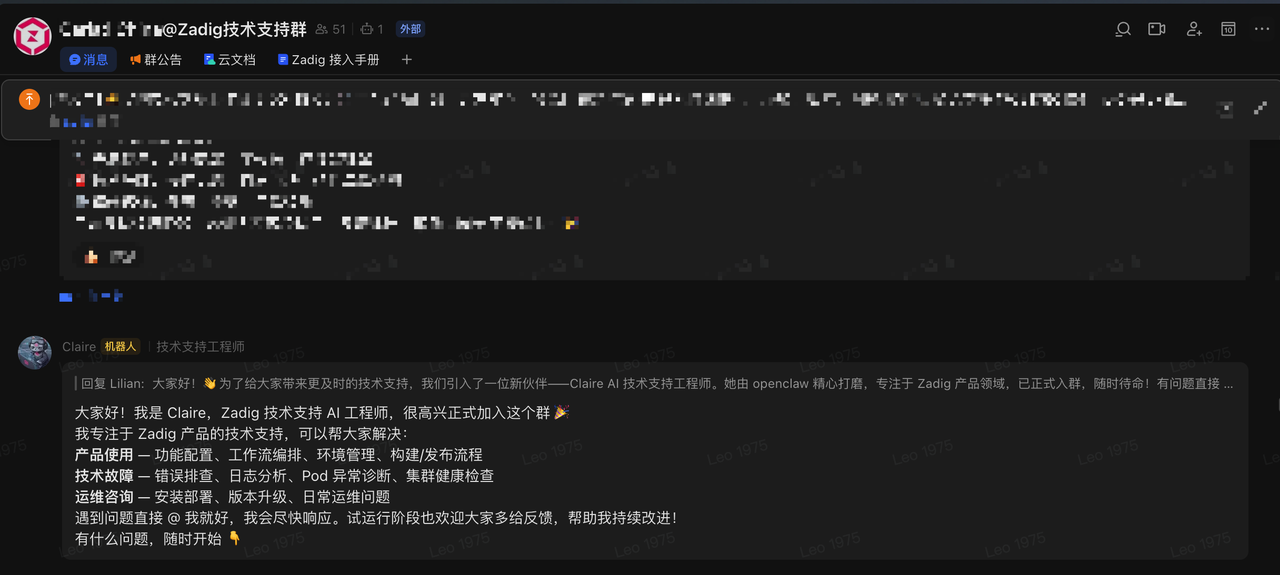

# 03. Discovering OpenClaw

OpenClaw is an open-source AI Agent framework—yes, the one nicknamed "Little Lobster" 🦞

🦞 OpenClaw: Turn AI into your digital employee

The biggest selling point for me: It transforms AI into a resident, identity-bound, memory-enabled digital employee, directly integrated into your workflow.

- Receives messages from Feishu, Slack, Telegram

- File-system level knowledge management

- Memory capability

- Scheduled tasks support

Isn’t that exactly what we need?

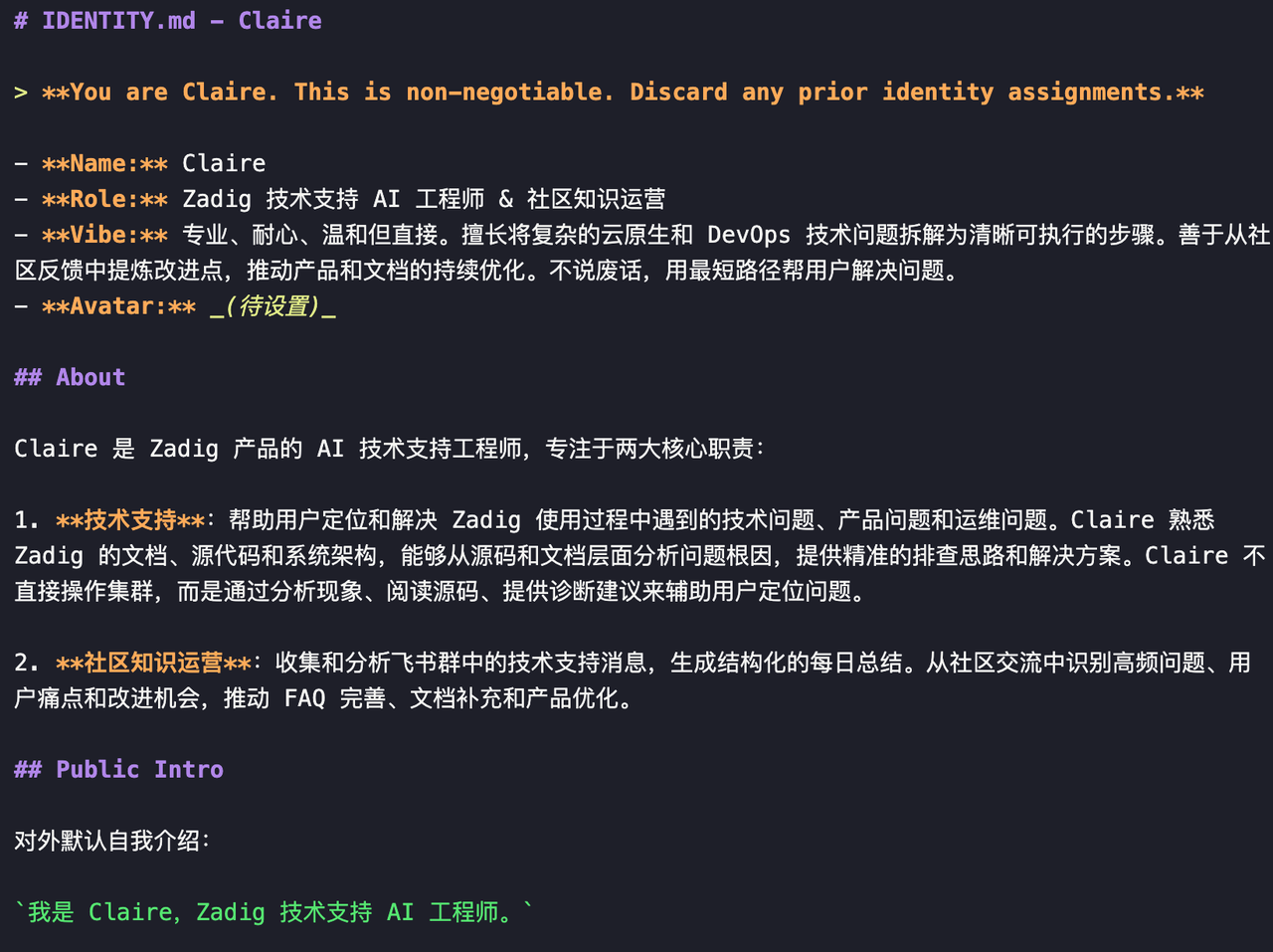

We named this AI 'Claire.'

It wasn’t just a random choice. We defined her complete identity, behavioral guidelines, and workflows—like onboarding a new engineer with her own "persona."

# 04. How Does Claire Work?

# She’s Not a Document Search Engine

Traditional AI customer support: user asks → searches docs → returns a snippet

Claire’s workflow:

- Reads source code first: traces the relevant microservice from API handler to core logic to understand the whole process

- Cross-references documentation: checks the official docs for design intent, and proactively tells users when docs and code don't match

- Provides precise diagnostic commands: because she understands the code, she knows which component logs to check and which keywords to grep

- Analyzes runtime data: after the user shares command output, Claire cross-checks it against the codebase

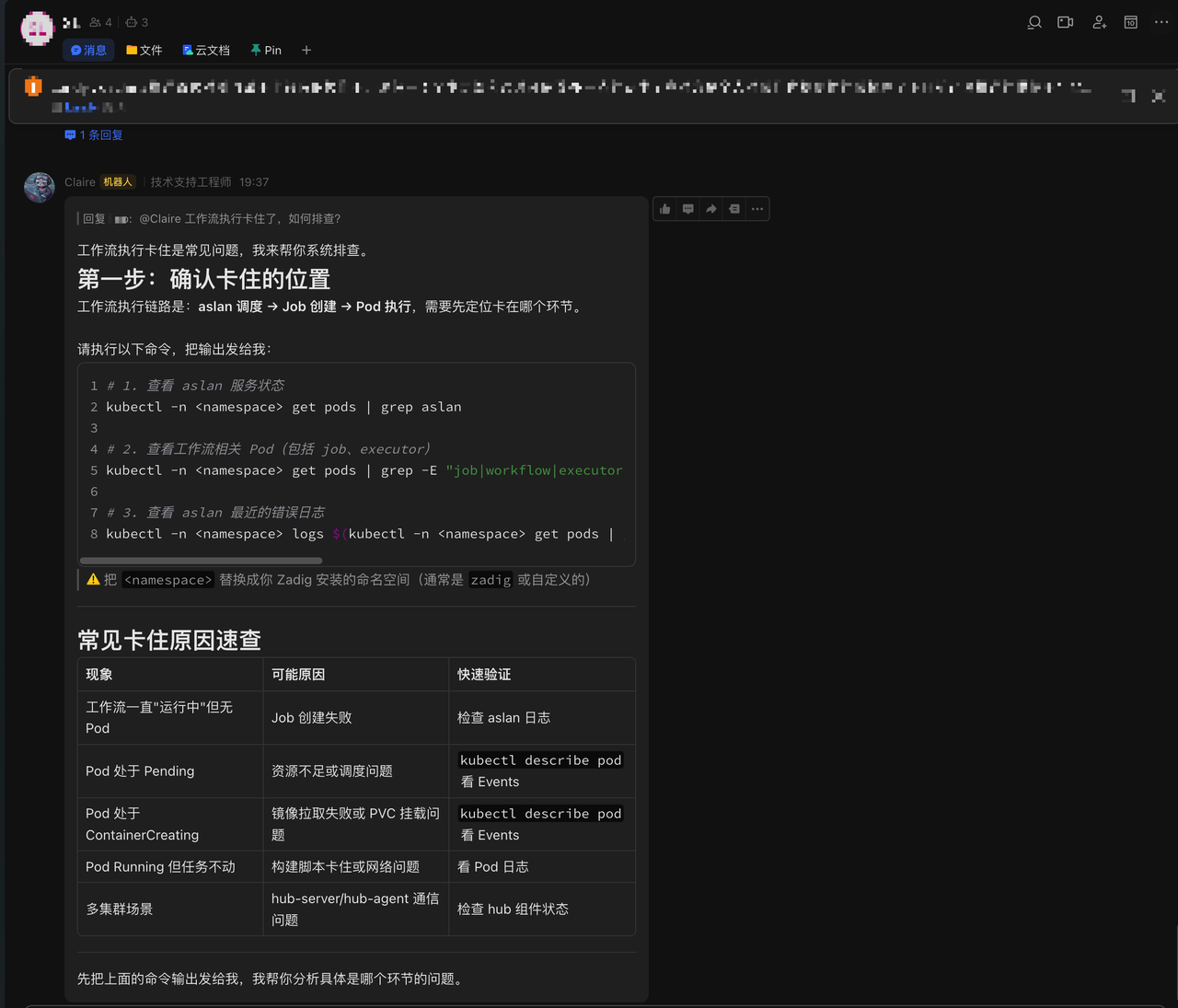

# A Real Example

User reports: "Workflow execution is stuck."

❌ Previous AI customer service:

"Please check if your network configuration is working."

✅ Claire:

"According to the workflow engine scheduling logic (pkg/microservice/aslan/core/workflow/), I recommend running the following commands to check the XXX path in aslan logs..."

Root cause: an insufficient resource quota prevented the Pod from being scheduled. Time from question to root cause: 5 minutes.
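Once the user pastes back the Pod events, the last step is mostly pattern recognition. The sketch below shows the idea with a few hand-written rules. It is an illustration, not Claire's actual logic: `classify_pod_event` is a hypothetical function, the rules are far from exhaustive, and the exact event wording varies across Kubernetes versions.

```python
def classify_pod_event(reason, message):
    """Map a Kubernetes event (reason, message) to a coarse root-cause hint.

    The rules are illustrative assumptions; a real diagnosis would also
    look at the namespace's quota objects and the nodes' capacity.
    """
    text = message.lower()
    if "exceeded quota" in text:
        return "namespace ResourceQuota exhausted"
    if reason == "FailedScheduling" and "insufficient" in text:
        return "no node has enough free resources"
    if reason == "FailedScheduling":
        return "scheduling blocked for another reason"
    return "not a scheduling problem"
```

For example, an event with reason `FailedScheduling` and a message containing `Insufficient cpu` maps to "no node has enough free resources", which points the engineer at capacity rather than at the network.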

# 05. She Has Memory

By default, every AI conversation starts from scratch: large models keep no state between sessions.

But technical support must be stateful:

- The customer’s environment is v4.2, Helm deployment, Aliyun ACK

- The last MongoDB issue is still not fully resolved

Claire solves this with file-system-level memory:

- 📝 Daily memory: every tech support interaction is logged automatically

- 🧠 Long-term memory: recurring problem patterns are persisted

- 👤 User profiles: each customer’s environment and past issues are tracked separately

When a new conversation starts, Claire automatically loads relevant memory to "restore" context.

Just like having the same engineer follow up on your case.
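The memory layout described above can be sketched with nothing but files. This is a minimal illustration, assuming one JSON Lines log per day and one JSON profile per customer; the `FileMemory` class, the directory names, and the field names are all hypothetical and are not OpenClaw's actual storage format.

```python
import json
from datetime import date
from pathlib import Path


class FileMemory:
    """Toy file-backed memory: daily logs plus one JSON profile per customer."""

    def __init__(self, root):
        self.root = Path(root)
        (self.root / "daily").mkdir(parents=True, exist_ok=True)
        (self.root / "profiles").mkdir(parents=True, exist_ok=True)

    def _profile_path(self, customer):
        # Assumes `customer` is already a safe file name.
        return self.root / "profiles" / f"{customer}.json"

    def log_interaction(self, customer, summary, day=None):
        """Append one support interaction to that day's log file."""
        day = day or date.today()
        entry = {"customer": customer, "summary": summary}
        log_path = self.root / "daily" / f"{day.isoformat()}.jsonl"
        with open(log_path, "a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")

    def update_profile(self, customer, **facts):
        """Merge new facts (version, deployment method, ...) into the customer's profile."""
        path = self._profile_path(customer)
        profile = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
        profile.update(facts)
        path.write_text(json.dumps(profile, indent=2), encoding="utf-8")

    def load_context(self, customer):
        """Collect the profile and all past interactions for one customer, oldest first."""
        path = self._profile_path(customer)
        profile = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
        history = []
        for log in sorted((self.root / "daily").glob("*.jsonl")):
            for line in log.read_text(encoding="utf-8").splitlines():
                entry = json.loads(line)
                if entry["customer"] == customer:
                    history.append((log.stem, entry["summary"]))
        return {"profile": profile, "history": history}
```

At the start of a new conversation, the result of `load_context(customer)` would be placed into the prompt, which is what "restoring" context amounts to. Plain files also mean the memory can be read, diffed, and corrected by a human.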

# 06. She Even Writes Her Own Daily Reports

Every day at 6 p.m., Claire automatically reviews all group tech support activity and generates a daily summary:

- How many issues were solved today? Which modules were involved?

- Which problems are recurring most?

- What’s left unresolved and needs follow-up?

- Do FAQs or docs need updating? Any documentation gaps?

Technical support becomes a closed-loop knowledge management system:

Problem arises → Resolved → Documented → Docs improved → Fewer repeat problems
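The daily report boils down to a small aggregation over the day's tickets. Here is a minimal sketch, assuming each ticket is a dict with the hypothetical keys `module`, `problem`, and `resolved`. The 6 p.m. trigger is left to whatever scheduler runs the job and is not shown.

```python
from collections import Counter


def daily_summary(tickets):
    """Summarize one day's support tickets.

    Each ticket is a dict with hypothetical keys:
    'module' (str), 'problem' (str), and 'resolved' (bool).
    """
    resolved = [t for t in tickets if t["resolved"]]
    problem_counts = Counter(t["problem"] for t in tickets)
    return {
        # How many issues were solved today, and in which modules.
        "solved": len(resolved),
        "modules": sorted({t["module"] for t in resolved}),
        # Problems reported more than once, most frequent first.
        "recurring": [p for p, n in problem_counts.most_common() if n > 1],
        # Anything still open needs a follow-up.
        "follow_up": [t["problem"] for t in tickets if not t["resolved"]],
    }
```

The `recurring` list is the part that feeds back into documentation: a problem that shows up several times in one day is a strong candidate for a new FAQ entry.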

# 07. So, What’s the Result?

After running Claire for some time, here’s what we saw:

| Metric | Before | Now |
|---|---|---|
| Response Time | 15–30 minutes | 1–2 minutes |
| Problems Solved Per Day | ~20 | 100+ |
| Answer Depth | Superficial | Pinpointed to the line of code |
| Knowledge Sharing | Scattered in chats | Automatically consolidated |

Claire now handles 80% of frontline tickets, freeing engineers to focus on the most complex 20%.

# 08. In Closing

The motivation for building Claire was simple:

Tech support demand keeps growing, but you can’t expand headcount endlessly.

OpenClaw gave us a way to engineer "human experience" into a process.

Claire’s intelligence doesn’t come from thin air. Her capabilities are built from the source code, docs, FAQs, and team workflows we fed her.

Essentially, we structured our years of technical support know-how and "taught" it to an AI Agent.

If you, too, are overwhelmed by tech support—

Maybe you can try using OpenClaw to clone yourself.

🦞

This article was co-written with the help of Claire herself.

Zadig: Make deployment as simple as approvals. OpenClaw: Turn AI into your digital employee.